Artificial intelligence (AI) has advanced rapidly over the past several decades, moving from theoretical frameworks to practical applications that now touch many aspects of modern life. This article traces the evolution of AI algorithms from their origins to today's state-of-the-art techniques.

The Dawn of AI: Early Beginnings

The origins of AI can be traced back to the mid-20th century, when computer scientists began exploring the potential for machines to simulate human intelligence. Alan Turing, a pioneer in computing, introduced the concept of a “universal machine” in 1936, laying the groundwork for modern computation. In 1950, Turing published the seminal paper “Computing Machinery and Intelligence,” which posed the now-famous question: “Can machines think?” The Turing Test, introduced in this paper, became a benchmark for evaluating machine intelligence.

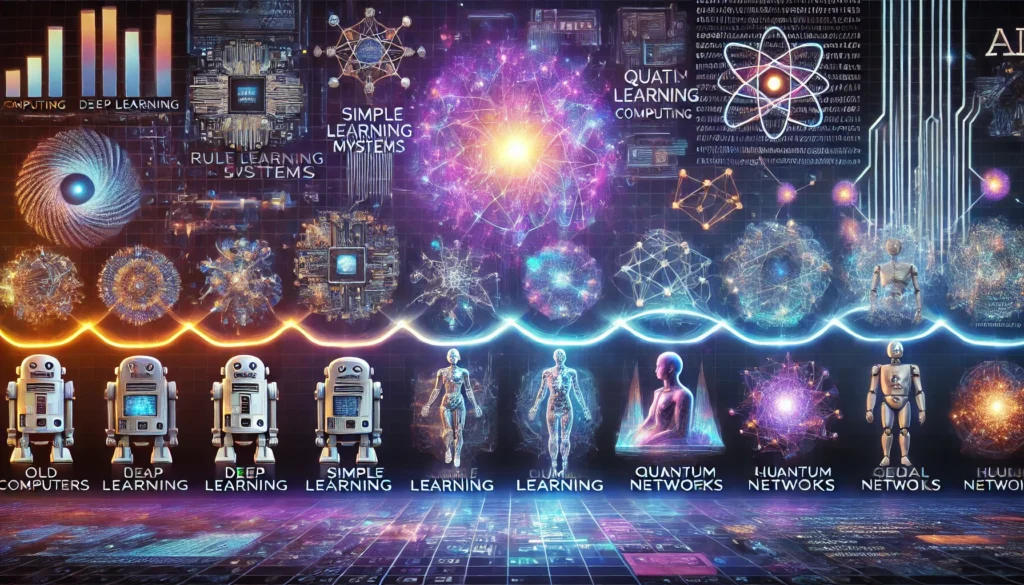

Early AI programs were largely rule-based, relying on logical reasoning and symbolic computation. In the 1960s and 1970s, researchers built expert systems such as DENDRAL and MYCIN, which applied hand-coded rules to narrow problems: inferring molecular structures and recommending antibiotic treatments, respectively. These systems demonstrated the potential of AI but were limited by their dependence on human-written rules and their inability to learn from data.
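To make "predefined rules" concrete, here is a toy forward-chaining engine that keeps firing if-then rules until no new facts can be derived. It is an illustrative assumption, not a reconstruction of DENDRAL or MYCIN (MYCIN, for instance, reasoned backward from goals), and the animal-identification rules in `RULES` are hypothetical. Every piece of knowledge is written by hand; nothing is learned from data.

```python
def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new facts can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # Fire a rule only when all of its conditions are already known.
            if conclusion not in known and conditions <= known:
                known.add(conclusion)
                changed = True
    return known

# Hypothetical hand-written rules: (set of required facts, derived fact).
RULES = [
    ({"has_feathers"}, "is_bird"),
    ({"has_fur"}, "is_mammal"),
    ({"is_bird", "cannot_fly", "swims"}, "is_penguin"),
]

print(forward_chain({"has_feathers", "cannot_fly", "swims"}, RULES))
```

The penguin conclusion is reachable only after `is_bird` has itself been derived, which is the chaining that gives the technique its name.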

Machine Learning: A Paradigm Shift

The 1980s and 1990s marked a significant shift as machine learning (ML) moved to the center of AI research. Unlike rule-based systems, ML algorithms learn patterns from data, enabling them to make predictions or decisions without being explicitly programmed with rules. Key advancements during this era included:

- Neural Networks: Loosely inspired by the structure of the brain, neural networks gained popularity after David Rumelhart, Geoffrey Hinton, and Ronald Williams popularized the backpropagation algorithm for training multi-layer networks in 1986. Hinton went on to play a pivotal role in advancing the field.

- Decision Trees and Ensemble Methods: CART (Classification and Regression Trees, 1984) learns a single tree of if/then splits on the input features. Ensemble methods built on such trees, notably random forests (2001), later improved accuracy by combining the predictions of many trees.

- Support Vector Machines (SVMs): Introduced in their modern form in the 1990s, SVMs became a powerful classification tool by finding the hyperplane that separates two classes with the largest possible margin.
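Decision-tree learners such as CART grow a tree by repeatedly choosing the split that leaves each side as "pure" as possible. The minimal sketch below finds the best single threshold on one numeric feature using Gini impurity, the criterion CART uses for classification. The function names are hypothetical, and a full implementation would also search every feature, recurse on both sides, and prune the result.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: chance that two draws (with replacement) from `labels` disagree."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def best_split(xs, ys):
    """Return (threshold, weighted_impurity) for the best split `x <= threshold`."""
    best_threshold, best_score = None, float("inf")
    for t in sorted(set(xs)):
        left = [y for x, y in zip(xs, ys) if x <= t]
        right = [y for x, y in zip(xs, ys) if x > t]
        if not left or not right:
            continue  # a split must put at least one sample on each side
        # Weight each side's impurity by the fraction of samples it holds.
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(ys)
        if score < best_score:
            best_threshold, best_score = t, score
    return best_threshold, best_score
```

For example, `best_split([1, 2, 3, 10, 11, 12], [0, 0, 0, 1, 1, 1])` returns `(3, 0.0)`: a split at 3 separates the two classes perfectly.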

The Data Explosion and Big Data Era

The 2000s saw an explosion in data generation, driven by the growth of the internet, social media, and IoT devices. These large datasets, together with faster hardware, provided the fuel AI algorithms needed to thrive. Key developments included:

- Deep Learning: Building on neural networks, deep learning uses architectures with many layers, each extracting increasingly abstract features from the data. Convolutional Neural Networks (CNNs) revolutionized computer vision, especially after AlexNet's win in the 2012 ImageNet competition, while Recurrent Neural Networks (RNNs) excelled at sequence modeling tasks such as speech recognition and natural language processing.

- Unsupervised Learning: Techniques such as k-means clustering and principal component analysis (PCA) gained prominence, enabling the discovery of hidden structure in unlabeled data.

- Reinforcement Learning: Inspired by behavioral psychology, reinforcement learning algorithms such as Q-learning let agents learn by trial and error, adjusting their behavior based on the rewards they receive from interacting with their environment.
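Of the unsupervised techniques mentioned above, k-means is the easiest to show compactly. The sketch below is a plain-Python version of Lloyd's algorithm, the standard k-means procedure: assign each point to its nearest centroid, move each centroid to the mean of its assigned points, and repeat until the centroids stop moving. For reproducibility the caller supplies the initial centroids; practical implementations usually choose them randomly or with k-means++.

```python
import math

def assign(points, centroids):
    """Label each point with the index of its nearest centroid (Euclidean distance)."""
    return [min(range(len(centroids)), key=lambda i: math.dist(p, centroids[i]))
            for p in points]

def kmeans(points, centroids, max_iters=100):
    """Lloyd's algorithm: alternate assignment and update steps until convergence."""
    centroids = [tuple(c) for c in centroids]
    for _ in range(max_iters):
        labels = assign(points, centroids)
        new = []
        for k, c in enumerate(centroids):
            members = [p for p, lab in zip(points, labels) if lab == k]
            # Move the centroid to its cluster's mean; keep it in place if the cluster is empty.
            new.append(tuple(sum(xs) / len(members) for xs in zip(*members)) if members else c)
        if new == centroids:
            break  # converged: no centroid moved
        centroids = new
    return centroids, assign(points, centroids)

print(kmeans([(0, 0), (0, 1), (10, 10), (10, 11)], [(0, 0), (10, 10)]))
```

No labels are provided anywhere; the two groups emerge purely from the geometry of the points, yielding centroids `(0.0, 0.5)` and `(10.0, 10.5)`.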

The AI Renaissance: Modern Breakthroughs

Recent years have seen unprecedented advancements in AI, driven by improvements in computational power, data availability, and algorithmic innovation. Key milestones include:

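- Transformer Models: The transformer architecture, introduced by Google researchers in 2017, revolutionized natural language processing and underpins models such as Google’s BERT and OpenAI’s GPT series. Transformers rely on self-attention, which lets each token in a sequence weigh its relevance to every other token, and they parallelize well enough to be trained on vast amounts of text. This enables applications such as chatbots, machine translation, and content generation.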

- Generative AI: Algorithms like GANs (Generative Adversarial Networks) and diffusion models have enabled the creation of realistic images, videos, and audio. These technologies are used in fields ranging from entertainment to scientific research.

- Explainable AI (XAI): As AI systems become more complex, the need for transparency and interpretability has grown. XAI focuses on making AI decisions understandable to humans, addressing ethical and regulatory concerns.
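The self-attention mechanism behind transformers can be sketched as scaled dot-product attention, softmax(QKᵀ/√d_k)·V, the formulation used in the original transformer paper. In this plain-Python sketch each query scores every key, the scores are normalized with a softmax, and the output is the resulting weighted average of the value vectors. It assumes Q, K, and V are given directly as lists of vectors; a real transformer computes them from token embeddings with learned projection matrices and runs several attention heads in parallel.

```python
import math

def softmax(xs):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Scaled dot-product attention over lists of vectors (no learned projections)."""
    d_k = len(K[0])
    out = []
    for q in Q:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out
```

When all keys are identical, every value receives the same weight and the output is simply their mean: `attention([[1, 0]], [[1, 0], [1, 0]], [[2, 0], [0, 4]])` returns `[[1.0, 2.0]]`.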

Challenges and Future Directions

Despite remarkable progress, AI still faces challenges, including:

- Bias and Fairness: Models trained on historical data can reproduce the biases in that data, so ensuring that AI systems produce fair and equitable outcomes is crucial to avoid perpetuating societal inequalities.

- Energy Efficiency: Training large models requires significant computational resources, raising concerns about environmental impact.

- Ethics and Regulation: As AI permeates society, establishing ethical guidelines and regulations is essential to ensure responsible use.

The future of AI holds immense promise. Emerging trends include:

- Edge AI: Running AI models directly on edge devices, such as smartphones and sensors, for real-time processing with lower latency.

- Quantum Computing: Harnessing quantum mechanics to tackle certain problems that are intractable for classical computers.

- Augmented Intelligence: Combining human creativity and judgment with AI’s computational power to accelerate innovation.

Conclusion

The evolution of AI algorithms is a testament to human ingenuity and perseverance. From rule-based systems to transformative technologies like deep learning and generative models, AI continues to reshape the world. By addressing current challenges and embracing future opportunities, AI has the potential to unlock a brighter and more inclusive future for humanity.